Introduction

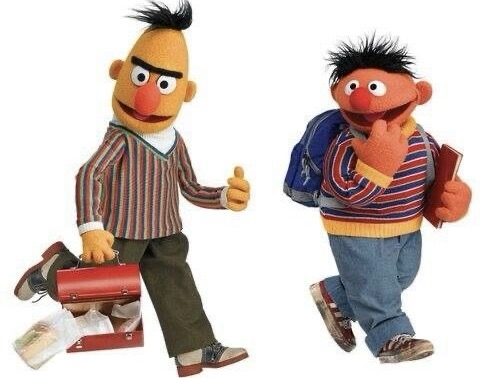

In the realm of Natural Language Processing (NLP), two formidable giants have emerged – GPT (Generative Pre-trained Transformer) and BERT (Bidirectional Encoder Representations from Transformers). Both have left indelible marks on the field, each offering distinct strengths and trade-offs.

In this blog post, we compare GPT and BERT in terms of embedding quality, accessibility and cost, open-source availability, and the black-box nature of GPT models.

Section 1: The Battle of Embedding Quality

GPT’s High-Quality Embeddings

GPT models have earned acclaim for the quality of their representations. Because they are trained to predict the next token across very large text corpora, they generate contextually rich, coherent text, which makes them powerful tools for a wide array of NLP tasks, including text generation and question answering.
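
Embedding quality is usually judged by how well the distance between two vectors tracks the similarity in meaning between the texts they represent. The minimal sketch below shows cosine similarity, the comparison most commonly applied to embeddings from either model family. The three-dimensional vectors are made-up illustrations, not real model output.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two embedding vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cannot compare a zero vector")
    return dot / (norm_a * norm_b)

# Hypothetical toy embeddings; real GPT or BERT vectors have hundreds or thousands of dimensions.
cat = [0.9, 0.1, 0.3]
kitten = [0.85, 0.15, 0.35]
invoice = [0.05, 0.9, 0.2]

print(round(cosine_similarity(cat, kitten), 3))   # related words: close to 1
print(round(cosine_similarity(cat, invoice), 3))  # unrelated words: much lower
```

With good embeddings, "cat" and "kitten" score far higher than "cat" and "invoice". Search, clustering, and recommendation systems built on embeddings rely on exactly this property.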

BERT’s Free and Respectable Embeddings

BERT holds its own in embedding quality. Because its encoder attends to the words on both sides of each token, it excels at capturing the contextual nuances of words and sentences, which makes it highly effective in NLP applications such as text classification and sentiment analysis.
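
Much of the difference between the two families comes down to which positions each token is allowed to attend to. GPT-style decoders use a causal mask, so token i sees only tokens 0 through i. BERT’s encoder lets every token see the whole sequence. The minimal sketch below builds both masks as plain Python lists for illustration. Real implementations build them as tensors, and this sketch ignores padding.

```python
def causal_mask(n: int) -> list[list[int]]:
    """GPT-style mask: row i marks the positions token i may attend to (itself and earlier)."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]

def bidirectional_mask(n: int) -> list[list[int]]:
    """BERT-style mask: every token may attend to every position in the sequence."""
    return [[1] * n for _ in range(n)]

tokens = ["the", "bank", "of", "the", "river"]
masks = [("causal", causal_mask(len(tokens))), ("bidirectional", bidirectional_mask(len(tokens)))]
for name, mask in masks:
    # Row 1 is the token "bank": list the tokens it is allowed to see.
    visible = [tokens[j] for j, allowed in enumerate(mask[1]) if allowed]
    print(f"{name}: 'bank' can see {visible}")
```

Under the causal mask, "bank" cannot see "river", the word that disambiguates it. Under the bidirectional mask, it can.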

Section 2: Accessibility and Cost

GPT’s Paid API

A significant distinction lies in accessibility. Recent GPT models, from GPT-3 onward, come with a cost: they are used through OpenAI’s paid API rather than downloaded. While the API offers powerful capabilities, this requirement poses a barrier for some businesses and developers.
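
Hosted GPT APIs are typically billed by the number of tokens processed, so cost grows with usage. The arithmetic is sketched below. The prices and traffic figures are hypothetical placeholders, not OpenAI’s actual rates, which change over time.

```python
def estimate_cost(prompt_tokens: int, completion_tokens: int,
                  price_per_1k_prompt: float, price_per_1k_completion: float) -> float:
    """Estimate one request's cost under per-1,000-token pricing (prices are caller-supplied)."""
    return ((prompt_tokens / 1000) * price_per_1k_prompt
            + (completion_tokens / 1000) * price_per_1k_completion)

# Hypothetical workload: 10,000 requests per day, 500 prompt + 200 completion tokens each,
# at made-up prices of 0.002 and 0.004 currency units per 1,000 tokens.
per_request = estimate_cost(500, 200, 0.002, 0.004)
print(f"per request: {per_request:.4f}")
print(f"per day:     {per_request * 10_000:.2f}")
```

Running an open model such as BERT on your own hardware removes the per-token charge, although you then pay for compute and maintenance instead.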

BERT’s Free and Open-Source Model

In contrast, BERT is open-source and freely accessible: Google released both the code and the pre-trained weights. Its open-source nature invites a broad spectrum of users, levelling the playing field for those who cannot or do not wish to pay for API access.

Section 3: The Open-Source Advantage

Analyzing BERT’s Inner Workings

BERT’s open-source design empowers researchers and developers to dig into its architecture, modify it, and integrate it into their own applications. This transparency fosters a collaborative environment in which continuous improvement and in-depth analysis are encouraged.
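
A common way to adapt BERT is to place a small task-specific layer on top of the encoder and then fine-tune the whole stack. One typical choice is a linear classifier over the final hidden vector of the special [CLS] token that BERT prepends to every input. The sketch below shows only the head’s forward pass. The four-dimensional [CLS] vector and the weights are hypothetical. For BERT-base, the vector would have 768 dimensions and the weights would be learned during fine-tuning.

```python
import math

def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def classify(cls_vector: list[float], weights: list[list[float]], bias: list[float]) -> list[float]:
    """Linear layer (one weight row per label) followed by softmax over the labels."""
    logits = [sum(w * x for w, x in zip(row, cls_vector)) + b
              for row, b in zip(weights, bias)]
    return softmax(logits)

# Hypothetical values for a 2-label sentiment head on top of BERT's [CLS] output.
cls_vector = [0.2, -1.0, 0.5, 0.3]
weights = [[0.1, 0.4, -0.2, 0.0],    # row for "negative"
           [-0.3, -0.5, 0.6, 0.2]]   # row for "positive"
bias = [0.0, 0.1]
labels = ["negative", "positive"]

probs = classify(cls_vector, weights, bias)
print(labels[probs.index(max(probs))], [round(p, 3) for p in probs])
```

Because only this small head is new, the same pre-trained encoder can be reused for many tasks by swapping the head and fine-tuning.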

Section 4: The Black-Box Nature of GPT

The Mystery of GPT

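GPT models, however, are far more of a black box. GPT-2’s weights were released publicly, but the trained weights of GPT-3 and later models are not available, and for the newest models many architectural and training details are also undisclosed. Users can only query these models through the API, which makes comprehensive analysis or modification difficult.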

Conclusion

GPT and BERT represent two NLP titans, each offering unique attributes and limitations. GPT boasts high-quality embeddings, while BERT provides accessible, open-source options. The choice between them depends on your specific needs, budget, and your desire for open-source transparency. This comparison highlights the dynamic landscape of NLP, catering to diverse users with distinct requirements.

Note:

Some examples of BERT-based models:

BERT: The original BERT model, developed by Google, is pre-trained on a large text corpus (BooksCorpus and English Wikipedia) by predicting masked-out words from the context on both sides, which is how it learns the context of words.

RoBERTa: Developed by Facebook AI, RoBERTa builds upon BERT’s architecture and training techniques. It utilizes a larger amount of data and longer training times, leading to improved performance.

ALBERT: A Lite BERT, developed by Google, reduces the number of parameters through factorized embeddings and cross-layer parameter sharing while maintaining competitive performance, which makes it more memory-efficient.

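DistilBERT: Developed by Hugging Face, DistilBERT is a distilled version of BERT, a smaller student model trained to mimic BERT’s behaviour. Its authors report that it is 40% smaller and 60% faster while retaining 97% of BERT’s language-understanding performance, which makes it well suited to resource-constrained applications.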

BioBERT: This model is BERT further pre-trained on biomedical literature (PubMed abstracts and PMC full-text articles), making it highly useful for healthcare and life-science NLP tasks such as biomedical named-entity recognition and question answering.

SciBERT: Similar to BioBERT but focused on scientific text, it’s pre-trained on a large corpus of scientific literature and is valuable for tasks in the scientific domain.

ClinicalBERT: Tailored for clinical notes, ClinicalBERT focuses on the medical field, allowing it to understand the specific terminology and context of healthcare.

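SapBERT: Despite the name, SapBERT is not an SAP product. The name refers to self-alignment pretraining: a biomedical BERT is further trained so that different names for the same medical concept (drawn from the UMLS ontology) end up close together in embedding space, which makes it useful for biomedical entity linking.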

CamemBERT: A version of BERT specifically designed for French text, CamemBERT is pre-trained on a large corpus of French language data.

Multilingual BERT (mBERT): mBERT is pre-trained on Wikipedia text in 104 languages. It’s a valuable tool for cross-lingual applications such as classification and named-entity recognition across languages. Like BERT, it is an encoder built for understanding text rather than generating it.
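
The masked-language-model objective behind BERT, which many of the models above reuse with modifications, can be sketched as follows. In the original BERT recipe, about 15% of token positions are chosen for prediction. Of those, 80% are replaced with [MASK], 10% with a random token, and 10% are left unchanged, and the model is trained to recover the originals. RoBERTa’s "dynamic masking" draws a fresh mask each time a sequence is seen instead of fixing it once. This sketch makes several simplifications. It selects each position independently with probability 0.15, uses a toy vocabulary, and uses whitespace tokenization, whereas real models use WordPiece or BPE subword tokenizers.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens: list[str], vocab: list[str], rng: random.Random,
                select_prob: float = 0.15) -> tuple[list[str], dict[int, str]]:
    """Apply BERT-style 80/10/10 masking; return corrupted tokens and {position: original}."""
    corrupted = list(tokens)
    targets: dict[int, str] = {}
    for i, tok in enumerate(tokens):
        if rng.random() >= select_prob:
            continue  # position not selected for prediction
        targets[i] = tok  # the model must predict the original token here
        roll = rng.random()
        if roll < 0.8:
            corrupted[i] = MASK               # 80%: replace with [MASK]
        elif roll < 0.9:
            corrupted[i] = rng.choice(vocab)  # 10%: replace with a random token
        # remaining 10%: keep the original token unchanged
    return corrupted, targets

vocab = ["the", "cat", "sat", "on", "mat", "dog", "ran"]  # hypothetical toy vocabulary
sentence = "the cat sat on the mat".split()
rng = random.Random(0)
for epoch in range(2):  # a fresh mask on every pass, as in RoBERTa's dynamic masking
    corrupted, targets = mask_tokens(sentence, vocab, rng)
    print(epoch, corrupted, targets)
```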

Some examples of GPT-based models:

GPT-3 (Generative Pre-trained Transformer 3): Developed by OpenAI, GPT-3 is one of the most famous models. It’s known for its large scale, with 175 billion parameters, and its ability to perform a wide range of natural language processing tasks, from text generation to translation and question-answering.

GPT-2 (Generative Pre-trained Transformer 2): GPT-2 preceded GPT-3 and was remarkable for its text generation capabilities. It also has multiple variations with different sizes for various use cases.

GPT-3.5: This is an intermediate model series between GPT-3 and GPT-4, and the family that powered ChatGPT at its launch.

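GPT-J: Developed by EleutherAI, GPT-J is an open-source, 6-billion-parameter model released as an openly available alternative to GPT-3. Although it is far smaller than GPT-3’s 175 billion parameters, it is notable for its high-quality text generation and its ability to perform various NLP tasks.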

Turing-NLG: Developed by Microsoft, Turing-NLG is a model designed for natural language generation. It’s useful for tasks like text completion, summarization, and question-answering.

Megatron-Turing NLG: A collaboration between NVIDIA and Microsoft, Megatron-Turing NLG (MT-NLG) is a 530-billion-parameter model that builds on NVIDIA’s Megatron-LM and Microsoft’s Turing-NLG work for even more powerful language generation.

ChatGPT: Another creation from OpenAI, ChatGPT is specifically fine-tuned for interactive conversations and chatbot applications. It’s designed for real-time, context-aware responses.

CodeGPT: Despite the name, CodeGPT is not from OpenAI. Microsoft released it as part of the CodeXGLUE benchmark: it is a GPT-2-style model pre-trained on Python and Java source code and intended to assist with code completion and generation. OpenAI’s own code-focused model from that period is Codex.

KoGPT (Korean GPT): Korean-language GPT models include SK Telecom’s KoGPT2 and Kakao Brain’s KoGPT. They understand and generate Korean text, making them valuable for Korean-language applications.

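DeBERTa: Although it often appears in lists like this one, DeBERTa (Decoding-enhanced BERT with disentangled attention), developed by Microsoft Research, is an encoder in the BERT family rather than a GPT-style generator. It improves on BERT and RoBERTa by representing each token’s content and position separately (disentangled attention) and adding an enhanced mask decoder. It is known for strong results on benchmarks such as GLUE and SuperGLUE.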
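
The GPT-style models in this list all generate text in the same basic way. The model predicts a distribution over the next token, one token is picked and appended, and the process repeats. The sketch below shows greedy decoding using a hypothetical hard-coded bigram table in place of the neural network. Real models condition on the whole preceding context and usually sample, with temperature, top-k, or top-p, rather than always taking the most likely token.

```python
# Hypothetical next-token probabilities: a bigram table standing in for a trained GPT model.
NEXT_TOKEN_PROBS: dict[str, dict[str, float]] = {
    "<s>": {"the": 0.6, "a": 0.4},
    "the": {"model": 0.5, "cat": 0.3, "</s>": 0.2},
    "a": {"model": 0.7, "</s>": 0.3},
    "model": {"generates": 0.8, "</s>": 0.2},
    "cat": {"sat": 0.9, "</s>": 0.1},
    "generates": {"text": 0.9, "</s>": 0.1},
    "sat": {"</s>": 1.0},
    "text": {"</s>": 1.0},
}

def greedy_generate(max_new_tokens: int = 10) -> list[str]:
    """Autoregressive loop: repeatedly append the most probable next token until </s>."""
    tokens = ["<s>"]
    for _ in range(max_new_tokens):
        probs = NEXT_TOKEN_PROBS[tokens[-1]]    # a real model would look at all of `tokens`
        next_token = max(probs, key=probs.get)  # greedy choice: highest probability
        if next_token == "</s>":
            break  # end-of-sequence token: stop generating
        tokens.append(next_token)
    return tokens[1:]  # drop the start marker

print(" ".join(greedy_generate()))  # prints "the model generates text"
```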

Transform Your Life with Rise&Inspire – Be part of our community, where uplifting vibes pave the way to success.
