What Kind of AI Practitioner Do You Want to Become?

Can you master Generative AI through self-directed learning and prompt engineering alone? Discover the hidden gaps in chatbot-based learning and why true AI mastery demands more than clever prompting.

Can You Master Generative AI Just by Chatting with ChatGPT and Claude?

The truth about self-directed AI learning and the hidden gaps that could derail your progress

In a world where artificial intelligence evolves by the minute, many aspiring learners and creators find themselves asking a compelling question: Can I master Generative AI simply by chatting with tools like ChatGPT or Claude and experimenting on my own?

The short answer is: Yes, partially—but not entirely.

While experimentation and hands-on practice with AI tools can take you surprisingly far, there’s another side to this story that many self-taught AI enthusiasts discover only when they hit their first major roadblock.

The Missing Piece: What Chatting with AI Can’t Teach You

Theoretical Foundation Gaps

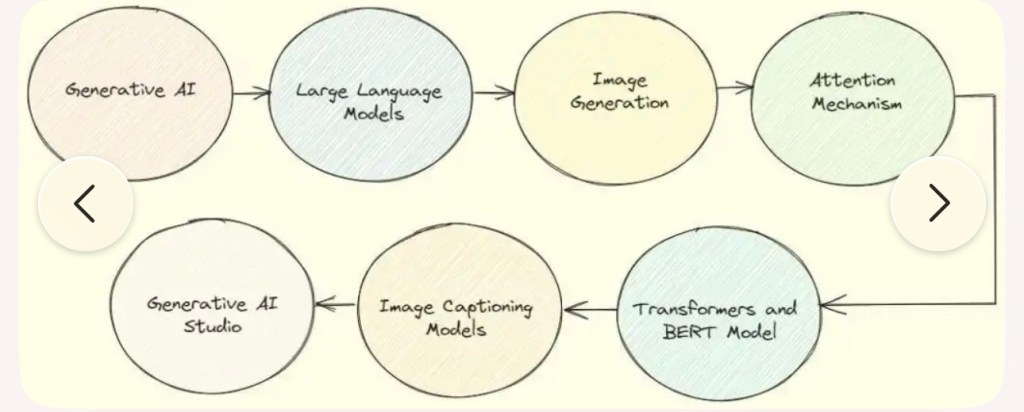

While chatting with AI tools gives you practical experience, you’ll miss the underlying mathematical and computational principles that drive these systems. Understanding concepts like transformer architectures, attention mechanisms, gradient descent, and neural network fundamentals becomes crucial when you need to troubleshoot, optimize, or innovate beyond basic use cases.

Without this foundation, you’re essentially driving a car without understanding how the engine works—fine for routine trips, but limiting when you need to diagnose problems or push performance boundaries.
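This minimal sketch makes one of these concepts concrete. It implements scaled dot-product attention, the core operation inside transformer layers. Each query is compared with every key, the scores are scaled by the square root of the key dimension and passed through a softmax, and the resulting weights average the value vectors. It is plain Python for readability and assumes a single head with no masking or batching. The vectors are made-up toy values; real frameworks implement the same idea with optimized tensor operations.

```python
import math
from typing import List, Tuple

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(
    queries: List[Vector], keys: List[Vector], values: List[Vector]
) -> Tuple[List[Vector], List[Vector]]:
    """Single-head attention without masking: softmax(q . k / sqrt(d_k)) weights over values."""
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        w = softmax(scores)
        weights.append(w)
        # Output is the attention-weighted average of the value vectors.
        outputs.append([sum(wj * v[i] for wj, v in zip(w, values)) for i in range(len(values[0]))])
    return outputs, weights


# Toy inputs (made-up numbers): 2 queries attending over 3 key/value pairs.
Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
V = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
outputs, weights = scaled_dot_product_attention(Q, K, V)
```

The first query matches the first and third keys equally well, so those two keys receive equal weight and the second key receives less.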

Systematic Learning Structure

Self-directed experimentation often leads to scattered, incomplete knowledge. You might become proficient at prompt engineering for creative writing but remain unaware of crucial applications in data analysis, code generation, or business process automation. A structured curriculum ensures comprehensive coverage of the field, from preprocessing techniques to model evaluation metrics, deployment strategies, and ethical considerations.

Industry Standards and Best Practices

Professional AI development involves rigorous methodologies that casual experimentation rarely exposes you to. This includes:

• Version control for models

• A/B testing frameworks

• Bias detection and mitigation

• Scalability considerations

• Regulatory compliance

These aren’t just theoretical concepts—they’re essential for anyone working with AI in professional settings.
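This sketch shows one calculation an A/B testing framework may perform under the hood: a two-sided, two-proportion z-test on conversion counts from two variants. It assumes independent observations and sample sizes large enough for the normal approximation to hold. The counts are hypothetical. A real framework may also need power analysis, corrections for repeated peeking, and guardrail metrics.

```python
import math
from typing import Tuple


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> Tuple[float, float]:
    """Two-sided z-test for a difference in conversion rate between variants A and B."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Standard normal CDF via the error function: Phi(x) = 0.5 * (1 + erf(x / sqrt(2))).
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return z, p_value


# Hypothetical experiment: variant B (new prompt template) vs. variant A (current one).
z, p = two_proportion_z_test(conv_a=120, n_a=1000, conv_b=150, n_b=1000)
```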

Hands-on Technical Implementation

While chatting with AI tools teaches you to be a sophisticated user, it doesn’t teach you to build, train, or fine-tune models yourself. Understanding how to work with datasets, implement custom architectures, or integrate AI capabilities into applications requires direct coding experience with frameworks like TensorFlow, PyTorch, or Hugging Face Transformers.
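Frameworks such as PyTorch compute gradients automatically, but their training loops follow the same basic pattern. This sketch trains a one-feature logistic regression with full-batch gradient descent in plain Python, so each step is visible:

1. predict
2. measure the error
3. compute the gradient of the log loss
4. update the parameters

The dataset, learning rate, and epoch count are illustrative assumptions. The example demonstrates what training involves; it is not a recipe for fine-tuning a large language model.

```python
import math
from typing import List, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic_regression(
    xs: List[float], ys: List[int], lr: float = 0.5, epochs: int = 2000
) -> Tuple[float, float]:
    """Fit P(y=1) = sigmoid(w * x + b) by full-batch gradient descent on the log loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            error = sigmoid(w * x + b) - y  # derivative of the log loss w.r.t. the logit
            grad_w += error * x
            grad_b += error
        # Step against the average gradient.
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b


# Toy 1-D dataset (made up): label is 1 when x is above roughly 2.
xs = [0.0, 0.5, 1.0, 3.0, 3.5, 4.0]
ys = [0, 0, 0, 1, 1, 1]
w, b = train_logistic_regression(xs, ys)
predictions = [1 if sigmoid(w * x + b) >= 0.5 else 0 for x in xs]
```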

Critical Evaluation Skills

Perhaps most importantly, without formal education or structured learning, you may struggle to critically evaluate AI outputs, understand their limitations, or recognize when results are unreliable. This analytical skill is essential for responsible AI use and development.

But What If You’re Already a Prompt Engineering Master?

Here’s where things get interesting. If you can truly design prompts that make AI do “any kind of work,” the formal and theoretical side becomes less essential for many practical purposes. Relying on prompting alone, however, introduces a different set of critical limitations.

The Power of Advanced Prompting

Sophisticated prompt engineering can indeed unlock remarkable capabilities. You can orchestrate complex workflows, break down intricate problems, guide reasoning processes, and even simulate specialized expertise across domains. Many successful AI practitioners today are essentially “prompt architects” who achieve impressive results without deep technical knowledge.

Where Prompting Hits Its Ceiling

However, several fundamental barriers emerge that prompting alone cannot overcome:

Performance and Cost Optimization: No amount of clever prompting changes what you pay for every API call at scale, or removes the latency of a remote service when you need real-time responses. You’ll eventually need to understand model selection, fine-tuning, or local deployment to make solutions economically viable.

Proprietary and Sensitive Applications: Many organizations cannot send their data to external AI services due to privacy, security, or competitive concerns. Prompting skills become irrelevant if you can’t access the tools in the first place.

Reliability and Consistency: Prompting can achieve impressive one-off results, but building systems that work reliably across thousands of varied inputs requires understanding failure modes, implementing fallback strategies, and creating robust evaluation frameworks.

Innovation Beyond Existing Capabilities: While prompting leverages existing AI capabilities creatively, it doesn’t create new capabilities. Breaking new ground requires understanding how to train models on custom data, modify architectures, or combine different AI approaches.
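Even a small evaluation harness makes the reliability point actionable. This sketch runs a system over a list of (input, expected output) pairs, reports the pass rate, and tallies failures by type: exceptions, empty outputs, and wrong answers. The `fake_classifier` function is a hypothetical stand-in for a prompted model. Exact string matching is a simplifying assumption; evaluating free-form text usually needs more lenient scoring.

```python
from collections import Counter
from typing import Callable, Iterable, Tuple


def evaluate(system: Callable[[str], str], cases: Iterable[Tuple[str, str]]) -> Tuple[float, Counter]:
    """Run `system` on (input, expected) pairs; return (pass rate, failure-mode counts)."""
    failures: Counter = Counter()
    total = passed = 0
    for prompt, expected in cases:
        total += 1
        try:
            output = system(prompt)
        except Exception as exc:  # a crash is a failure mode worth counting too
            failures[f"error:{type(exc).__name__}"] += 1
            continue
        if output.strip().lower() == expected.strip().lower():
            passed += 1
        elif not output.strip():
            failures["empty_output"] += 1
        else:
            failures["wrong_answer"] += 1
    return (passed / total if total else 0.0), failures


def fake_classifier(text: str) -> str:
    """Hypothetical stand-in for a prompted model: naive keyword sentiment."""
    if not text:
        raise ValueError("empty input")
    return "positive" if "good" in text else "negative"


cases = [
    ("good service", "positive"),
    ("bad service", "negative"),
    ("not good at all", "negative"),  # negation trips up the keyword rule
    ("", "negative"),                 # edge case: empty input
]
rate, failures = evaluate(fake_classifier, cases)
```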

The Dependency Fragility Risk

Your entire skillset becomes dependent on the continued availability and consistency of specific AI services. This resembles dependence on internet connectivity, with one important difference: you have no control over a provider’s pricing, access policies, or model updates.

Realistic Disruption Scenarios

Rather than complete unavailability, you’re more likely to face:

• Economic Barriers: API costs escalating dramatically

• Access Restrictions: Geopolitical tensions or regulatory limitations

• Service Fragmentation: AI landscape splitting into incompatible ecosystems

• Quality Degradation: Models changing behavior or becoming less capable after provider-side updates or resource constraints

Technical Knowledge as Insurance

Understanding how to run open-source models locally, fine-tune smaller models, build hybrid systems, and create fallback mechanisms becomes your safety net when external AI services become limited or unreliable.
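A fallback mechanism can be as simple as trying backends in order of preference. In this sketch, `hosted_api` and `local_model` are hypothetical placeholders. The first simulates an unavailable external service; the second stands in for an open-source model running locally. Any callable that takes a prompt string and returns text could be plugged in.

```python
from typing import Callable, List, Sequence, Tuple

Backend = Callable[[str], str]


def call_with_fallback(prompt: str, backends: Sequence[Tuple[str, Backend]]) -> Tuple[str, str]:
    """Try each (name, backend) pair in order; return the first successful (name, output)."""
    errors: List[str] = []
    for name, backend in backends:
        try:
            return name, backend(prompt)
        except Exception as exc:  # e.g. timeout, quota exceeded, service unavailable
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all backends failed: " + "; ".join(errors))


def hosted_api(prompt: str) -> str:
    """Hypothetical hosted service that is currently unreachable."""
    raise ConnectionError("service unavailable")


def local_model(prompt: str) -> str:
    """Hypothetical local open-source model; here it just echoes a truncated prompt."""
    return "[local] " + prompt[:30]


used, answer = call_with_fallback(
    "Summarize this support ticket", [("hosted", hosted_api), ("local", local_model)]
)
```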

The Optimal Learning Strategy

The sweet spot lies in combining both approaches:

1. Use AI tools for hands-on experimentation to build practical skills and intuition

2. Simultaneously build theoretical knowledge through courses, research papers, and systematic practice

3. Develop technical implementation skills to maintain independence and flexibility

4. Practice critical evaluation to become a responsible AI practitioner

Conclusion

Can you master Generative AI just by chatting with AI tools? You can certainly become proficient and accomplish remarkable things. But true mastery—the kind that creates lasting value, enables innovation, and provides resilience against changing technological landscapes—requires a more comprehensive approach.

The question isn’t whether you need formal education or technical depth. The question is: What kind of AI practitioner do you want to become?

If you’re content operating within existing boundaries, advanced prompting skills may suffice. But if you aspire to push those boundaries, solve novel problems, or build sustainable AI solutions, then the “other side” of AI learning becomes not just helpful—but essential.

Ready to dive deeper into AI learning? Start by identifying which skills you want to develop and create a balanced learning plan that combines hands-on experimentation with systematic knowledge building.

COMPREHENSIVE CURRICULUM: DATA ANALYSIS, CODE GENERATION & BUSINESS PROCESS AUTOMATION

Course Overview

Duration: 24 weeks (6 months intensive) or 48 weeks (12 months part-time)

Prerequisites: Basic programming knowledge, statistics fundamentals

Target Audience: Data professionals, software developers, business analysts, automation specialists

Module 1: Foundations and Environment Setup (Week 1-2)

Learning Objectives

• Establish development environments for data analysis and automation

• Understand the interconnected nature of data analysis, code generation, and process automation

• Master version control and collaborative development practices

Topics Covered

• Development Environment Setup
   ◦ Python ecosystem (Anaconda, Jupyter, VS Code)
   ◦ R environment (RStudio, packages)
   ◦ Database connections (SQL, NoSQL)
   ◦ Cloud platforms (AWS, Azure, GCP basics)

• Version Control & Collaboration
   ◦ Git fundamentals and workflows
   ◦ Documentation standards
   ◦ Code review processes
   ◦ Project structure best practices

• Data Ecosystem Overview
   ◦ Data pipeline architecture
   ◦ ETL vs ELT paradigms
   ◦ Batch vs streaming processing
   ◦ Data governance principles

Practical Exercises

• Set up complete development environment

• Create first data pipeline project structure

• Implement basic version control workflow
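For the first pipeline project, this is a minimal extract-transform-load skeleton that uses only the Python standard library. The inline CSV and the `orders` table are made-up examples. In an ELT design, the raw rows would be loaded first, and the cleaning step would run inside the database or warehouse instead.

```python
import csv
import io
import sqlite3
from typing import Dict, List, Tuple

# Made-up raw export: note the lowercase currency code and the missing amount.
RAW_CSV = """order_id,amount,currency
1,19.99,usd
2,5.00,USD
3,,usd
"""


def extract(text: str) -> List[Dict[str, str]]:
    """Extract: parse raw CSV rows (from a string here, instead of a file or API)."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: List[Dict[str, str]]) -> List[Tuple[int, float, str]]:
    """Transform: drop rows missing an amount, cast types, normalize currency codes."""
    clean = []
    for row in rows:
        if not row["amount"]:
            continue
        clean.append((int(row["order_id"]), float(row["amount"]), row["currency"].upper()))
    return clean


def load(records: List[Tuple[int, float, str]], conn: sqlite3.Connection) -> None:
    """Load: write cleaned records into a SQLite table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders (order_id INTEGER PRIMARY KEY, amount REAL, currency TEXT)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", records)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
rows = conn.execute("SELECT order_id, amount, currency FROM orders ORDER BY order_id").fetchall()
```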

Module 2: Data Preprocessing and Quality Management (Week 3-4)

Learning Objectives

• Master data cleaning and transformation techniques

• Implement robust data quality frameworks

• Handle missing data and outliers effectively

Topics Covered

• Data Quality Assessment
   ◦ Data profiling techniques
   ◦ Quality metrics and KPIs
   ◦ Automated quality checks
   ◦ Data lineage tracking

• Data Cleaning Techniques
   ◦ Missing value handling strategies
   ◦ Outlier detection and treatment
   ◦ Data type conversions
   ◦ Text preprocessing (NLP applications)

• Data Transformation
   ◦ Feature engineering fundamentals
   ◦ Scaling and normalization
   ◦ Categorical encoding methods
   ◦ Time series preprocessing

• Advanced Preprocessing
   ◦ Handling imbalanced datasets
   ◦ Feature selection techniques
   ◦ Dimensionality reduction
   ◦ Data augmentation strategies

Practical Exercises

• Build automated data quality pipeline

• Implement comprehensive preprocessing library

• Create data profiling dashboard
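The standard library covers several building blocks of the automated data quality pipeline:

• a missing-value rate for profiling
• median imputation
• Tukey's IQR fences for outlier detection
• min-max scaling

The sample column is invented. The choice of median imputation and the conventional k = 1.5 fence are assumptions to revisit for each dataset.

```python
import statistics
from typing import List, Optional


def missing_rate(values: List[Optional[float]]) -> float:
    """Share of entries that are missing (None): a basic profiling metric."""
    return sum(v is None for v in values) / len(values)


def impute_median(values: List[Optional[float]]) -> List[float]:
    """Replace missing entries with the median of the observed values."""
    med = statistics.median([v for v in values if v is not None])
    return [med if v is None else v for v in values]


def iqr_outliers(values: List[float], k: float = 1.5) -> List[float]:
    """Values outside Tukey's fences [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


def min_max_scale(values: List[float]) -> List[float]:
    """Rescale to [0, 1]; a constant column maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0] * len(values)
    return [(v - lo) / (hi - lo) for v in values]


# Invented sensor column with two gaps and one suspicious spike.
raw = [12.0, 15.0, None, 14.0, 13.0, 250.0, 16.0, None]
rate = missing_rate(raw)
filled = impute_median(raw)  # the median is robust to the 250.0 spike
outliers = iqr_outliers(filled)
```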

Module 3: Exploratory Data Analysis and Visualization (Week 5-6)

Learning Objectives

• Develop systematic EDA methodologies

• Create effective data visualizations

• Build interactive dashboards and reports

Topics Covered

• Statistical Analysis Foundations
   ◦ Descriptive statistics
   ◦ Distribution analysis
   ◦ Correlation and association measures
   ◦ Hypothesis testing in EDA context

• Visualization Techniques
   ◦ Static visualizations (matplotlib, seaborn, ggplot2)
   ◦ Interactive visualizations (Plotly, Bokeh)
   ◦ Geospatial visualization
   ◦ Network and graph visualization

• Dashboard Development
   ◦ Streamlit applications
   ◦ Dash framework
   ◦ Tableau/Power BI integration
   ◦ Real-time dashboard creation

• Advanced EDA Techniques
   ◦ Automated EDA tools
   ◦ Storytelling with data
   ◦ A/B testing visualization
   ◦ Cohort analysis

Practical Exercises

• Complete EDA project with business insights

• Build interactive dashboard

• Create automated EDA pipeline
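Descriptive statistics and correlation are usually the first numbers an EDA pass produces. This sketch computes a summary for one column and the Pearson correlation between two columns, using the `statistics` module. The ad-spend and revenue figures are made up, and a high correlation says nothing about causation.

```python
import math
import statistics
from typing import Dict, List


def describe(values: List[float]) -> Dict[str, float]:
    """Basic descriptive statistics for one numeric column."""
    return {
        "count": len(values),
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "stdev": statistics.stdev(values),  # sample standard deviation
        "min": min(values),
        "max": max(values),
    }


def pearson(xs: List[float], ys: List[float]) -> float:
    """Pearson correlation coefficient between two equal-length columns."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


ad_spend = [1.0, 2.0, 3.0, 4.0, 5.0]       # illustrative numbers only
revenue = [10.0, 12.0, 15.0, 19.0, 24.0]
summary = describe(revenue)
r = pearson(ad_spend, revenue)
```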

Module 4: Statistical Analysis and Machine Learning (Week 7-10)

Learning Objectives

• Apply appropriate statistical methods for business problems

• Build and evaluate machine learning models

• Understand model selection and validation techniques

Topics Covered

• Statistical Modeling
   ◦ Linear and logistic regression
   ◦ Time series analysis and forecasting
   ◦ Survival analysis
   ◦ Bayesian methods

• Machine Learning Fundamentals
   ◦ Supervised learning algorithms
   ◦ Unsupervised learning techniques
   ◦ Ensemble methods
   ◦ Deep learning basics

• Model Development Process
   ◦ Problem formulation
   ◦ Feature engineering for ML
   ◦ Model selection strategies
   ◦ Cross-validation techniques

• Advanced ML Topics
   ◦ AutoML frameworks
   ◦ Model interpretability (SHAP, LIME)
   ◦ Handling concept drift
   ◦ Multi-modal learning

Practical Exercises

• Build end-to-end ML pipeline

• Implement model comparison framework

• Create interpretable ML solution
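Cross-validation is simple enough to write by hand, which makes it a useful exercise before relying on a library implementation such as scikit-learn's. This sketch generates shuffled k-fold splits and averages a caller-supplied score across folds. The `fit_majority` baseline and `accuracy` function are hypothetical helpers chosen only to keep the example self-contained.

```python
import random
from collections import Counter
from typing import Iterator, List, Tuple


def k_fold_indices(n_samples: int, k: int = 5, seed: int = 0) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_idx, test_idx) lists for shuffled k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # fixed seed keeps the splits reproducible
    # Spread the remainder so fold sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, test_idx
        start += size


def cross_val_score(fit, score, X, y, k: int = 5, seed: int = 0) -> float:
    """Fit on each training split, score on the held-out fold, return the mean score."""
    scores = []
    for train_idx, test_idx in k_fold_indices(len(X), k, seed):
        model = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        scores.append(score(model, [X[i] for i in test_idx], [y[i] for i in test_idx]))
    return sum(scores) / len(scores)


# Hypothetical helpers: a majority-class baseline and plain accuracy.
def fit_majority(X_train, y_train):
    return Counter(y_train).most_common(1)[0][0]


def accuracy(model, X_test, y_test) -> float:
    return sum(model == label for label in y_test) / len(y_test)


X = list(range(10))
y = [0] * 7 + [1] * 3
baseline = cross_val_score(fit_majority, accuracy, X, y, k=5)
```

A majority-class baseline like this gives a floor that any real model should beat.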

Module 5: Model Evaluation and Performance Metrics (Week 11-12)

Learning Objectives

• Master comprehensive model evaluation techniques

• Implement appropriate metrics for different problem types

• Develop model monitoring and maintenance strategies

Topics Covered

• Evaluation Metrics
   ◦ Classification metrics (accuracy, precision, recall, F1, AUC-ROC)
   ◦ Regression metrics (MAE, MSE, MAPE, R²)
   ◦ Ranking and recommendation metrics
   ◦ Custom business metrics

• Model Validation Techniques
   ◦ Cross-validation strategies
   ◦ Time series validation
   ◦ Stratified sampling
   ◦ Bootstrap methods

• Performance Analysis
   ◦ Bias-variance tradeoff
   ◦ Learning curves
   ◦ Confusion matrix analysis
   ◦ Error analysis techniques

• Model Monitoring
   ◦ Performance drift detection
   ◦ Data drift monitoring
   ◦ A/B testing for models
   ◦ Continuous evaluation pipelines

Practical Exercises

• Build comprehensive model evaluation framework

• Implement automated monitoring system

• Create performance reporting dashboard
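Most of the listed classification and regression metrics reduce to short formulas. This sketch computes accuracy, precision, recall, and F1 from confusion-matrix counts, plus MAE, MSE, MAPE, and R² for regression. The labels and predictions are illustrative. MAPE is undefined when a true value is zero, so the sketch assumes nonzero targets.

```python
from typing import Dict, List, Tuple


def confusion_counts(y_true: List[int], y_pred: List[int], positive: int = 1) -> Tuple[int, int, int, int]:
    """Return (TP, FP, FN, TN) for a binary problem."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    return tp, fp, fn, len(pairs) - tp - fp - fn


def classification_report(y_true: List[int], y_pred: List[int]) -> Dict[str, float]:
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(y_true), "precision": precision, "recall": recall, "f1": f1}


def regression_report(y_true: List[float], y_pred: List[float]) -> Dict[str, float]:
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mean_t = sum(y_true) / n
    ss_res = sum(e * e for e in errors)
    ss_tot = sum((t - mean_t) ** 2 for t in y_true)
    return {
        "mae": sum(abs(e) for e in errors) / n,
        "mse": ss_res / n,
        "mape": 100 * sum(abs(e / t) for e, t in zip(errors, y_true)) / n,  # assumes no zero targets
        "r2": 1 - ss_res / ss_tot,
    }


clf = classification_report([1, 0, 1, 1, 0, 0, 1, 0], [1, 0, 0, 1, 0, 1, 1, 0])
reg = regression_report([3.0, 5.0, 2.0, 7.0], [2.5, 5.0, 3.0, 8.0])
```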

Module 6: Code Generation and Automation (Week 13-14)

Learning Objectives

• Develop automated code generation systems

• Implement template-based and AI-assisted coding

• Build reusable automation frameworks

Topics Covered

• Code Generation Techniques
   ◦ Template-based generation
   ◦ Abstract Syntax Tree (AST) manipulation
   ◦ Domain-specific languages (DSL)
   ◦ AI-assisted code generation

• Automation Frameworks
   ◦ Task scheduling (Airflow, Luigi)
   ◦ Workflow orchestration
   ◦ Event-driven automation
   ◦ Serverless automation

• Code Quality and Testing
   ◦ Automated testing frameworks
   ◦ Code quality metrics
   ◦ Continuous integration/deployment
   ◦ Documentation generation

• Advanced Automation
   ◦ Self-healing systems
   ◦ Adaptive automation
   ◦ Natural language to code
   ◦ Low-code/no-code platforms

Practical Exercises

• Build code generation tool

• Implement automated workflow system

• Create self-documenting pipeline
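Python's standard `string.Template` and `ast` modules are enough to demonstrate two of the listed code generation techniques: template-based generation and AST manipulation. The getter template and the `compute_total` to `calculate_total` rename are hypothetical examples. `ast.unparse` requires Python 3.9 or later, and `exec` should only ever run code you generated and trust.

```python
import ast
from string import Template

# Template-based generation: fill a code skeleton from a (hypothetical) field spec.
FUNC_TEMPLATE = Template(
    "def get_$field(record):\n"
    "    \"\"\"Return the '$field' value from a record dict.\"\"\"\n"
    "    return record.get('$field', $default)\n"
)


def generate_getter(field: str, default) -> str:
    source = FUNC_TEMPLATE.substitute(field=field, default=repr(default))
    ast.parse(source)  # fail fast if the generated code is not valid Python
    return source


class RenameCalls(ast.NodeTransformer):
    """AST manipulation: rename every direct call to `old` into a call to `new`."""

    def __init__(self, old: str, new: str):
        super().__init__()
        self.old, self.new = old, new

    def visit_Call(self, node: ast.Call) -> ast.Call:
        self.generic_visit(node)  # also handle calls nested in the arguments
        if isinstance(node.func, ast.Name) and node.func.id == self.old:
            node.func.id = self.new
        return node


def rename_calls(source: str, old: str, new: str) -> str:
    tree = RenameCalls(old, new).visit(ast.parse(source))
    return ast.unparse(tree)  # Python 3.9+


src = generate_getter("email", None)
namespace: dict = {}
exec(src, namespace)  # only for trusted, self-generated code
legacy = "total = compute_total(items)\nprint(compute_total(items))"
migrated = rename_calls(legacy, "compute_total", "calculate_total")
```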

Module 7: Business Process Automation (Week 15-16)

Learning Objectives

• Design and implement end-to-end business process automation

• Integrate multiple systems and data sources

• Optimize processes for efficiency and reliability

Topics Covered

• Process Analysis and Design
   ◦ Business process mapping
   ◦ Bottleneck identification
   ◦ ROI analysis for automation
   ◦ Change management strategies

• Integration Technologies
   ◦ API development and integration
   ◦ Message queues and streaming
   ◦ Database integration patterns
   ◦ Legacy system integration

• Robotic Process Automation (RPA)
   ◦ RPA tools and frameworks
   ◦ UI automation techniques
   ◦ Exception handling in RPA
   ◦ RPA governance and security

• Enterprise Automation
   ◦ Workflow engines
   ◦ Business rule engines
   ◦ Process mining
   ◦ Digital twin concepts

Practical Exercises

• Design complete business process automation

• Implement multi-system integration

• Build process monitoring dashboard
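Business rule engines separate decision logic from process code. This sketch is a deliberately tiny, hypothetical rule engine for invoice approval. Rules are evaluated in priority order, and the first match decides the action. A case with missing data is routed to manual review instead of crashing the process. The rule names, thresholds, and actions are illustrative assumptions and do not reflect any real product's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Rule:
    name: str
    condition: Callable[[Dict], bool]
    action: str
    priority: int = 0  # higher-priority rules are checked first


def decide(rules: List[Rule], case: Dict, default: str = "manual_review") -> str:
    """Return the action of the highest-priority matching rule, else `default`."""
    for rule in sorted(rules, key=lambda r: r.priority, reverse=True):
        try:
            if rule.condition(case):
                return rule.action
        except KeyError:
            # Incomplete record: route to a human instead of guessing.
            return default
    return default


# Hypothetical invoice-approval policy; thresholds are illustrative.
rules = [
    Rule("auto_approve_small", lambda c: c["amount"] < 500, "auto_approve", priority=1),
    Rule("escalate_large", lambda c: c["amount"] >= 10_000, "escalate_to_finance", priority=2),
    Rule("reject_unverified_vendor", lambda c: not c["vendor_verified"], "reject", priority=3),
]
```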

Module 8: Deployment and Production Strategies (Week 17-18)

Learning Objectives

• Deploy models and automation systems to production

• Implement scalable and reliable deployment architectures

• Manage production systems effectively

Topics Covered

• Deployment Architectures
   ◦ Containerization and orchestration (Docker, Kubernetes)
   ◦ Microservices architecture
   ◦ Serverless deployment
   ◦ Edge computing deployment

• MLOps and DevOps
   ◦ CI/CD pipelines for ML
   ◦ Model versioning and registry
   ◦ Infrastructure as code
   ◦ Monitoring and alerting

• Scalability and Performance
   ◦ Load balancing strategies
   ◦ Caching mechanisms
   ◦ Database optimization
   ◦ Performance testing

• Production Best Practices
   ◦ Error handling and recovery
   ◦ Logging and observability
   ◦ Security considerations
   ◦ Disaster recovery planning

Practical Exercises

• Deploy ML model to production

• Implement complete MLOps pipeline

• Create scalable automation system
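Caching is one of the simplest scalability levers for a deployed model: repeated requests for the same input can skip an expensive computation. This sketch implements a time-to-live (TTL) cache with an injectable clock, so expiry can be tested without sleeping. The key format and the `expensive_prediction` function are hypothetical. A multi-process deployment would typically use a shared cache service instead of an in-memory dict.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """Cache computed values for `ttl` seconds; `clock` is injectable for testing."""

    def __init__(self, ttl: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl
        self.clock = clock
        self._store: Dict[Hashable, Tuple[float, Any]] = {}
        self.hits = self.misses = 0

    def get_or_compute(self, key: Hashable, compute: Callable[[], Any]) -> Any:
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            self.hits += 1
            return entry[1]
        # Missing or expired: recompute and remember when it was stored.
        self.misses += 1
        value = compute()
        self._store[key] = (now, value)
        return value


fake_now = [0.0]
cache = TTLCache(ttl=60, clock=lambda: fake_now[0])
calls = []


def expensive_prediction() -> float:
    calls.append(1)
    return 0.87  # stand-in for a slow model or database call


first = cache.get_or_compute("customer:42", expensive_prediction)   # miss
second = cache.get_or_compute("customer:42", expensive_prediction)  # hit
fake_now[0] = 61.0
third = cache.get_or_compute("customer:42", expensive_prediction)   # expired, miss
```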

Module 9: Ethical Considerations and Responsible AI (Week 19-20)

Learning Objectives

• Understand ethical implications of automated systems

• Implement bias detection and mitigation strategies

• Develop responsible AI governance frameworks

Topics Covered

• AI Ethics Fundamentals
   ◦ Fairness and bias in algorithms
   ◦ Transparency and explainability
   ◦ Privacy and data protection
   ◦ Accountability in automated systems

• Bias Detection and Mitigation
   ◦ Statistical bias measures
   ◦ Fairness metrics
   ◦ Debiasing techniques
   ◦ Inclusive dataset creation

• Privacy and Security
   ◦ Differential privacy
   ◦ Federated learning
   ◦ Secure multi-party computation
   ◦ GDPR and compliance considerations

• Governance and Policy
   ◦ AI governance frameworks
   ◦ Risk assessment methodologies
   ◦ Stakeholder engagement
   ◦ Regulatory compliance

Practical Exercises

• Conduct bias audit on existing model

• Implement fairness constraints

• Create AI governance framework
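A bias audit often starts by comparing positive-prediction rates across groups. This sketch computes per-group selection rates, the demographic parity difference, and the disparate impact ratio. The group labels and predictions are invented. The disparate impact ratio is often compared against the "four-fifths rule" heuristic, under which a ratio below 0.8 warrants scrutiny. Which fairness metric is appropriate still depends on context.

```python
from collections import defaultdict
from typing import Dict, List


def selection_rates(groups: List[str], predictions: List[int]) -> Dict[str, float]:
    """Share of positive predictions (1) within each group."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for g, p in zip(groups, predictions):
        totals[g] += 1
        positives[g] += p
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(groups: List[str], predictions: List[int]) -> float:
    """Gap between the highest and lowest group selection rates (0.0 means parity)."""
    rates = selection_rates(groups, predictions)
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(groups: List[str], predictions: List[int]) -> float:
    """Lowest group selection rate divided by the highest (1.0 means equal rates)."""
    rates = selection_rates(groups, predictions)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


groups = ["A"] * 5 + ["B"] * 5
predictions = [1, 1, 1, 0, 1, 1, 0, 0, 1, 0]  # invented model outputs
rates = selection_rates(groups, predictions)
dpd = demographic_parity_difference(groups, predictions)
dir_ratio = disparate_impact_ratio(groups, predictions)
```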

Capstone Project (Week 21-24)

Project Requirements

Students must complete a comprehensive project incorporating elements from all modules:

1. Data Pipeline: Build end-to-end data processing pipeline

2. Analysis Component: Perform thorough analysis with insights

3. ML/Automation: Implement machine learning or process automation

4. Deployment: Deploy solution to production environment

5. Monitoring: Implement monitoring and maintenance procedures

6. Ethics Review: Conduct ethical assessment of solution

Deliverables

• Working system/application

• Technical documentation

• Business impact analysis

• Ethical considerations report

• Presentation to stakeholders

Assessment Strategy

Continuous Assessment (60%)

• Weekly assignments and quizzes

• Practical exercises and mini-projects

• Peer code reviews

• Discussion forum participation

Module Projects (25%)

• End-of-module practical projects

• Integration of multiple concepts

• Real-world problem solving

Capstone Project (15%)

• Comprehensive final project

• Demonstration of all learning objectives

• Professional presentation

Resources and Tools

Primary Technologies

• Programming: Python, R, SQL

• Data Processing: Pandas, NumPy, Apache Spark

• Machine Learning: Scikit-learn, TensorFlow, PyTorch

• Visualization: Matplotlib, Plotly, Tableau

• Deployment: Docker, Kubernetes, AWS/Azure/GCP

• Automation: Apache Airflow, Selenium, UiPath

Learning Resources

• Interactive coding platforms

• Case study databases

• Industry datasets

• Guest expert sessions

• Open source project contributions

Support Systems

• Dedicated mentorship program

• Peer learning groups

• Office hours with instructors

• Industry project partnerships

Career Pathways

Immediate Opportunities

• Data Analyst

• Business Intelligence Developer

• Process Automation Specialist

• ML Engineer

• Data Scientist

Advanced Career Tracks

• Chief Data Officer

• AI/ML Architect

• Business Process Consultant

• Technical Product Manager

• Research Scientist

Continuing Education

Advanced Specializations

• Deep Learning and Neural Networks

• Natural Language Processing

• Computer Vision

• Reinforcement Learning

• Quantum Computing Applications

Industry Certifications

• Cloud platform certifications

• Data science certifications

• Process automation certifications

• Ethics and governance certifications

This curriculum provides a comprehensive foundation while remaining flexible enough to adapt to specific industry needs and emerging technologies.


© 2025 Rise&Inspire. All Rights Reserved.
