The central idea of this blog post is:

Not all AI models handle vague or unclear prompts the same way—and choosing the right one depends on whether you prioritize speed, creativity, accuracy, or safety.

One-Line Essence

Choose your AI model based on how you handle uncertainty: speed and bold assumptions, or caution and precision.

Real work is rarely perfectly structured. You brainstorm. You explore territory you don’t fully understand. You write prompts that are half-formed because the direction isn’t yet defined. When you’re in that mode, you don’t want an AI that asks for clarification. You want one that makes sensible assumptions and delivers something immediately usable. But what you gain in speed, you might lose in accuracy.

Here’s the trade-off breakdown.

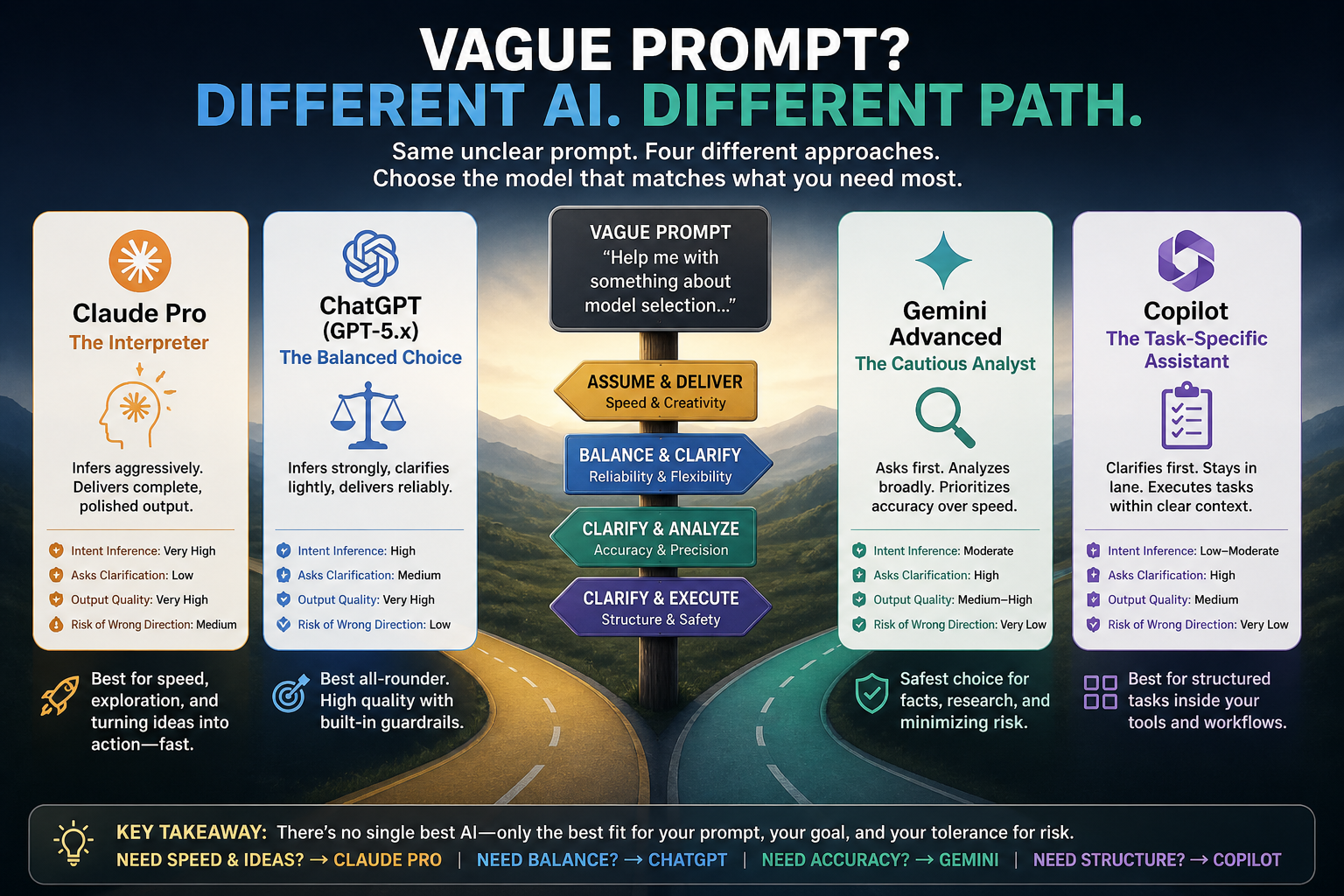

A Decision-Grade Comparison: Claude Pro, ChatGPT, Gemini Advanced, and Copilot

When Your Prompt Is Unclear, Which Model Delivers the Best Output?

Introduction: The Vague Prompt Problem

We’ve all written unclear prompts. Maybe you know what you want but can’t articulate it. Maybe you’re exploring an idea and the direction isn’t yet defined. Maybe the context is too complex to express in a single paragraph.

The question isn’t whether the prompt is poorly written. The question is: which AI model will infer your intent correctly, make sensible assumptions, and deliver usable output without asking for clarification?

This matters. In real work—writing, brainstorming, coding, analysis—you don’t always have time to structure your request perfectly. Some models will confidently deliver incomplete answers. Others will ask clarifying questions. And some will give you something genuinely useful immediately.

How We Evaluate: The Framework

We tested four major models under one core condition: a deliberately vague, underspecified prompt that requires inference, assumption-making, and intent reconstruction.

We measured four dimensions:

Intent Inference: How well does the model guess what you actually wanted?

Willingness to Assume: Does it make reasonable assumptions, or does it ask for clarification?

Output Quality (Vague Prompt): Is the output immediately usable, or does it feel generic and incomplete?

Risk of Wrong Direction: How likely is it that the model’s assumptions took you somewhere you didn’t intend?

The Comparison at a Glance

| Model | Assumes Intent | Asks for Clarification | Output Quality | Risk of Wrong Direction |
| --- | --- | --- | --- | --- |
| Claude Pro | Very High | Low | Very High | Medium |
| ChatGPT (GPT-5.x) | High (Balanced) | Medium | Very High | Low |
| Gemini Advanced | Moderate | High | Medium–High | Very Low |
| Copilot | Low–Moderate | High | Medium | Very Low |
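If you want to sort or filter the comparison yourself, the table can be written down as data. The sketch below is only an illustration: the 1-to-5 numeric scale, the column keys, and the `score` and `rank_by` helpers are assumptions made for this example, and ranges such as Medium-High are simply averaged. Labels use a plain hyphen in code for easier typing.

```python
# Numeric scale for the table's labels. The numbers are an assumption
# made for illustration; the post itself only uses the words.
SCALE = {"Very Low": 1, "Low": 2, "Medium": 3, "Moderate": 3, "High": 4, "Very High": 5}

# Ratings copied from the comparison table above.
TABLE = {
    "Claude Pro": {"assumes_intent": "Very High", "asks_clarification": "Low",
                   "output_quality": "Very High", "risk": "Medium"},
    "ChatGPT (GPT-5.x)": {"assumes_intent": "High (Balanced)", "asks_clarification": "Medium",
                          "output_quality": "Very High", "risk": "Low"},
    "Gemini Advanced": {"assumes_intent": "Moderate", "asks_clarification": "High",
                        "output_quality": "Medium-High", "risk": "Very Low"},
    "Copilot": {"assumes_intent": "Low-Moderate", "asks_clarification": "High",
                "output_quality": "Medium", "risk": "Very Low"},
}


def score(label: str) -> float:
    """Turn a label such as 'Medium-High' or 'High (Balanced)' into a number."""
    label = label.split("(")[0].strip()           # drop qualifiers like "(Balanced)"
    parts = [p.strip() for p in label.split("-")]  # a range becomes two labels
    return sum(SCALE[p] for p in parts) / len(parts)


def rank_by(column: str, descending: bool = True) -> list:
    """Return model names ordered by one column of the table."""
    return sorted(TABLE, key=lambda m: score(TABLE[m][column]), reverse=descending)


if __name__ == "__main__":
    print(rank_by("assumes_intent"))
    print(rank_by("risk", descending=False))
```

Sorting by willingness to assume intent puts Claude Pro first and Copilot last, while sorting by risk (lowest first) puts Gemini Advanced and Copilot at the top.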

Model-by-Model Analysis

1. Claude Pro—The Interpreter

If you have to choose one model to handle unclear prompts, Claude Pro is the answer.

What Claude Does

• Aggressively reconstructs your intent from minimal information

• Produces fully structured, polished, immediately usable output

• Rarely blocks on missing detail or asks clarifying questions

• Fills gaps with reasonable assumptions automatically

The Strength

Claude excels at turning vague, half-formed ideas into complete, professional output. You ask for help with ‘something about model selection,’ and you get a structured analysis. This is exceptionally useful when you’re exploring territory you don’t fully understand.

The Limitation

Claude’s confidence can work against you. If your intent was actually different from what Claude assumed, it delivers a highly polished wrong answer, which can be harder to correct than a generic placeholder. You may need to actively challenge Claude’s assumptions rather than accept them.

Bottom Line

Highest productivity when prompts are weak. Highest risk of confident wrong direction.

2. ChatGPT (GPT-5.x)—The Balanced Choice

ChatGPT represents the middle ground: strong inference with controlled assumption-making.

What ChatGPT Does

• Infers intent, but checks boundaries more carefully than Claude

• Often delivers a strong answer AND lightly clarifies assumptions

• Combines high reasoning with structural reliability

• Sometimes adds conditional branching: ‘If you meant X, here’s that approach…’

The Strength

ChatGPT gives you high-quality, usable output without making you second-guess the assumptions. It’s like having a colleague who understands what you probably meant but isn’t afraid to clarify the boundaries. This makes it reliable across a wider range of use cases.

The Behavior with Vague Prompts

You get immediate output. The output is structured and professional. And you also get a subtle acknowledgment of the ambiguity: ‘Based on what you’ve described, here’s my interpretation…’ This allows you to course-correct if needed, but you’re not blocked waiting for clarification.

Bottom Line

Most consistent, most reliable, safest bet for unclear prompts while maintaining high productivity.

3. Gemini Advanced—The Cautious Analyst

Gemini prioritizes precision over interpretation.

What Gemini Does

• Hesitates to assume when ambiguity is high

• Often asks clarifying questions rather than inferring

• Provides broad, general answers when intent is unclear

• Prioritizes factual grounding over creative interpretation

The Strength

Gemini minimizes the risk of hallucination and confident wrong answers. If you have a fact-based question or need research-grade output, Gemini’s conservative approach is an asset. You’re less likely to be led astray.

The Limitation

The output can feel generic, less tailored, and sometimes incomplete. For exploratory or creative work, where your prompt is inherently vague, Gemini’s caution becomes a bottleneck. You end up answering clarifying questions and supplying the missing detail yourself instead of getting immediately usable output.

Bottom Line

Safer, but less useful when prompts are weak. Better for fact-checking than for exploration.

4. Copilot—The Task-Specific Assistant

Copilot is designed around structured productivity tools (Word, Excel, coding environments).

What Copilot Does

• Typically asks for clarification before proceeding

• Stays within narrow, task-specific interpretation

• Works best when there’s clear context (a Word document, a code file, a spreadsheet)

• Conservative by default

The Strength

In structured workflows—editing a document, writing code, managing a spreadsheet—Copilot is reliable. It understands context from the environment and doesn’t pretend to know what you meant when it doesn’t. This is exactly what you want when you’re working within defined tools.

The Limitation

Copilot is weak at open-ended, vague prompts without environmental context. If you’re brainstorming, exploring ideas, or asking something abstract, Copilot will often ask for more information rather than making reasonable leaps. For exploratory AI work, it’s the least capable of the four.

Bottom Line

Most effective in structured environments. Least effective in ambiguity-heavy scenarios.

Final Ranking: Which Model Wins?

For unclear, vague, or underspecified prompts:

🥇 #1: Claude Pro

Strength: Best at assumption, expansion, and producing complete answers immediately

Trade-off: Highest productivity, highest risk

🥈 #2: ChatGPT (GPT-5.x)

Strength: Best balance of inference, correctness, and controlled assumptions

Trade-off: Most reliable overall

🥉 #3: Gemini Advanced

Strength: Best for cautious, fact-based responses, but needs clearer prompts

Trade-off: Safest, but less useful in ambiguity

4️⃣ #4: Copilot

Strength: Best in structured workflows, weakest in open ambiguity

Trade-off: Most limited for exploratory work

The Deeper Insight: Three Different AI Philosophies

The differences between these models reflect three competing philosophies about how AI should behave when facing ambiguity.

Philosophy 1: ‘Assume and Deliver’ (Claude)

Claude’s approach: Treat the user’s half-formed idea as a complete request. Infer intent aggressively. Deliver immediately usable output. The user will correct you if needed.

Advantage: High productivity. You never wait for clarification.

Disadvantage: You might confidently go the wrong direction.

Philosophy 2: ‘Clarify and Constrain’ (Gemini, Copilot)

Gemini and Copilot’s approach: When ambiguity is high, ask clarifying questions. Don’t assume. Deliver only what you’re confident about. The user will provide more detail if needed.

Advantage: Lower risk of wrong answers. Safer operation.

Disadvantage: Lower immediacy. You need to do clarification work yourself.

Philosophy 3: ‘Balanced Reasoning’ (ChatGPT)

ChatGPT’s approach: Infer intent and deliver immediately usable output, but acknowledge the boundaries of that inference. Give you the answer AND a light clarification of assumptions.

Advantage: Combines productivity with reliability.

Disadvantage: As the middle ground, it is less polished than Claude and less cautious than Gemini.
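To make the three philosophies concrete, here is a toy sketch of each one as a response strategy. It does not describe how any of these products work internally. The `Response` type, the `DEFAULTS` dictionary, and every function name are hypothetical, invented for this illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical defaults a responder falls back on when a detail is missing.
DEFAULTS = {"audience": "general readers", "format": "short article"}


@dataclass
class Response:
    output: Optional[str]                                  # None means "blocked on questions"
    assumptions: List[str] = field(default_factory=list)   # what was guessed
    questions: List[str] = field(default_factory=list)     # what was asked back


def missing_details(request: dict) -> List[str]:
    """List the details the vague request did not specify."""
    return [key for key in DEFAULTS if key not in request]


def draft(request: dict) -> str:
    """Stand-in for actually generating content."""
    return f"A {request['format']} on {request['topic']} for {request['audience']}"


def assume_and_deliver(request: dict) -> Response:
    """Philosophy 1: fill every gap silently and deliver."""
    return Response(output=draft({**DEFAULTS, **request}))


def clarify_and_constrain(request: dict) -> Response:
    """Philosophy 2: ask about every gap before producing anything."""
    gaps = missing_details(request)
    if gaps:
        return Response(output=None, questions=[f"What {g} do you have in mind?" for g in gaps])
    return Response(output=draft(request))


def balanced_reasoning(request: dict) -> Response:
    """Philosophy 3: deliver, but state the assumptions that were made."""
    gaps = missing_details(request)
    notes = [f"Assumed {g}: {DEFAULTS[g]}" for g in gaps]
    return Response(output=draft({**DEFAULTS, **request}), assumptions=notes)


if __name__ == "__main__":
    vague = {"topic": "model selection"}
    for strategy in (assume_and_deliver, clarify_and_constrain, balanced_reasoning):
        print(strategy.__name__, "->", strategy(vague))
```

With the same vague request, the first strategy returns a draft and nothing else, the second returns only questions, and the third returns the draft along with the assumptions it made, which is what lets you course-correct without being blocked.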

Which Model Should You Choose?

The answer depends on what you value:

If You Write Vague, Intuitive Prompts Often

→ Choose Claude Pro

You get complete answers immediately. Claude’s assumption-making is a feature, not a bug.

If You Want High-Quality Output Without Risking Wrong Assumptions

→ Choose ChatGPT

You get the best balance. Strong output, clear reasoning, controlled assumptions. Safe and reliable across most use cases.

If You’re Doing Fact-Based, Research-Heavy Work

→ Choose Gemini Advanced

Gemini’s caution is an asset here. You’re less likely to be misled.

If You’re Working Within Structured Tools (Word, Excel, Code)

→ Choose Copilot

Copilot understands tool-specific context and works reliably in those environments.
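The guide above boils down to a simple lookup. The sketch below encodes it that way. The priority keys and the `recommend` function are names made up for this example, and the mapping only restates the recommendations in this post.

```python
# Maps what you value to the model this post recommends.
# The keys are hypothetical labels chosen for this sketch.
RECOMMENDATIONS = {
    "vague_prompts": "Claude Pro",
    "quality_without_wrong_assumptions": "ChatGPT",
    "fact_based_research": "Gemini Advanced",
    "structured_tools": "Copilot",
}


def recommend(priority: str) -> str:
    """Return the recommended model for a given priority."""
    if priority not in RECOMMENDATIONS:
        options = ", ".join(sorted(RECOMMENDATIONS))
        raise ValueError(f"Unknown priority {priority!r}; expected one of: {options}")
    return RECOMMENDATIONS[priority]


if __name__ == "__main__":
    print(recommend("vague_prompts"))
```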

Conclusion: Your Vague Prompt Deserves the Right Model

The worst time to use the wrong AI model is when your prompt is vague. That’s exactly when you need the model’s inference capabilities, assumption-making, and confidence. You can’t afford caution or generic answers.

If you’re exploring ideas, writing, analyzing complex topics, or working through something you don’t yet fully understand—Claude Pro is your best bet. It will turn your half-formed thoughts into usable output. Just be prepared to challenge its assumptions if needed.

If you want a safer, more reliable general-purpose choice—ChatGPT is the sensible middle ground. You get strong output without the risk of confident wrong direction.

And if you’re in a specialized context—fact-checking, structured tool use, or open research—Gemini and Copilot serve those needs well. Just don’t expect them to shine on vague, exploratory prompts.

Which of these AI philosophies matches your actual workflow: do you need Claude’s confidence and immediate polish, or do you prefer ChatGPT’s balance of inference and caution? Share your experience in the comments below.

Strive to elevate in life.

K. John Britto Kurusumuthu

Series: Tech Insights – Rise & Inspire

© 2026 Rise & Inspire. All rights reserved.
