How Do RAG and Agentic AI Transform Modern Business Intelligence?

Discover how Retrieval-Augmented Generation (RAG) and agentic AI are revolutionizing business intelligence. Learn how the two differ, what each offers, and how they work together to create smarter AI systems.

What’s the Difference Between RAG and Agentic AI? A Complete Guide

The artificial intelligence landscape is rapidly evolving, with two groundbreaking approaches leading the charge: Retrieval-Augmented Generation (RAG) and agentic AI. While both technologies promise to revolutionize how businesses interact with information and automate processes, they solve fundamentally different problems and offer unique advantages.

Understanding these technologies isn’t just academic—it’s essential for business leaders, developers, and organizations looking to harness AI’s full potential. Whether you’re considering implementing AI solutions or simply want to understand where the field is heading, this comprehensive guide will break down everything you need to know.

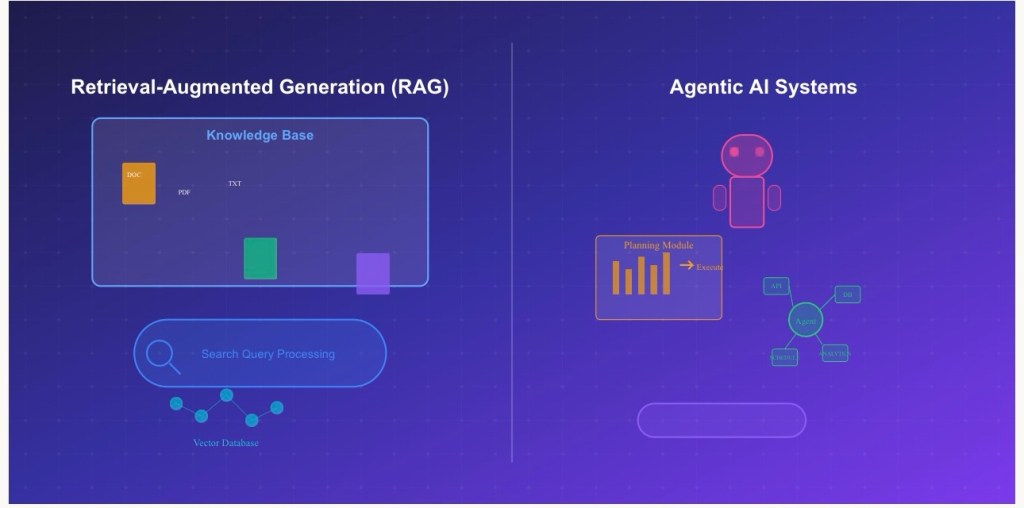

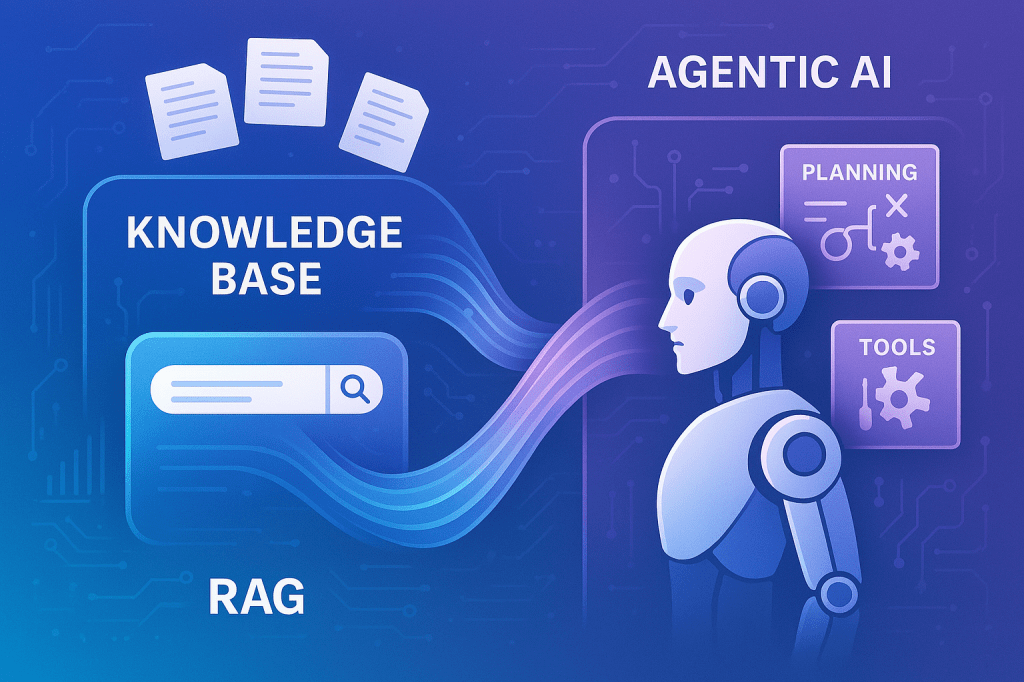

Understanding Retrieval-Augmented Generation (RAG)

Retrieval-Augmented Generation represents a paradigm shift in how AI systems access and use information. Traditional language models are limited by their training data—they can only work with information they learned during their initial training phase. RAG changes this by creating a bridge between AI models and external knowledge sources.

How RAG Works in Practice

The RAG process unfolds in several coordinated steps. When you ask a question, the system first converts your query into a searchable form, typically an embedding vector (a numeric representation of its meaning) or a keyword query. It then searches external databases, documents, or knowledge repositories for the most relevant passages. Those passages are inserted into the prompt alongside your question, and the language model generates its answer from them. This grounds responses in sources that can be kept current and verified, rather than relying only on potentially outdated training data.
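Those steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: a toy bag-of-words "embedding" and cosine similarity stand in for a real embedding model and vector database, the sample documents are invented, and the function names (`embed`, `retrieve`, `build_prompt`) are hypothetical. The final call to a language model is left out, since any LLM can consume the assembled prompt.

```python
import math
import re
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: a bag-of-words term-count vector.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    q = embed(query)  # step 1: convert the query into a searchable form
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]  # step 2: keep the k most similar documents


def build_prompt(query: str, passages: List[str]) -> str:
    # Step 3: augment the prompt so the model answers from the retrieved text.
    sources = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return (
        "Answer using only the sources below and cite them by number.\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


docs = [
    "Refunds are issued within 14 days of receiving the returned item.",
    "Our office is closed on public holidays.",
    "Premium support is available 24/7 for enterprise customers.",
]
question = "How long do refunds take?"
prompt = build_prompt(question, retrieve(question, docs, k=1))
print(prompt)  # step 4 (not shown): send this prompt to any LLM
```

Asking the model to cite sources by number makes it easier to check afterwards that an answer really came from the retrieved text.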

Think of RAG as giving an AI system access to a large library that can be kept up to date. Instead of relying solely on what it memorized during training, the AI can look up information, cross-reference sources, and respond based on the most recent material in that library.

The Business Impact of RAG

Organizations adopt RAG chiefly to improve the accuracy and relevance of AI-generated answers. Customer service departments use RAG to surface current product information, policy updates, and troubleshooting guides. Research teams use it to stay current with the latest publications and findings in their fields.

The technology is especially useful where information changes frequently. Law firms use RAG systems to access recent case law and regulations, and healthcare organizations use RAG to help keep clinical recommendations aligned with the latest research and treatment protocols.

Exploring Agentic AI Systems

Agentic AI represents a fundamental shift from reactive to proactive artificial intelligence. These systems don’t just respond to prompts—they exhibit goal-directed behavior, make autonomous decisions, and execute complex workflows without constant human intervention.

The Components of Agency

Successful agentic AI systems incorporate several critical capabilities. Planning allows these systems to break down complex objectives into manageable steps, creating roadmaps for achieving specific goals. Memory systems maintain context across interactions, enabling the AI to learn from previous experiences and build upon past decisions.
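A minimal sketch of how planning and memory fit together follows. It assumes a hypothetical `plan` function standing in for an LLM that decomposes a goal, and a simple in-process `Memory` store; production agents usually persist memory in a database or vector store. On a second run, the agent recalls which steps it already completed and skips them.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Memory:
    """Stores the outcome of each completed step so later runs can build on it."""
    completed: dict[str, str] = field(default_factory=dict)

    def remember(self, step: str, result: str) -> None:
        self.completed[step] = result

    def recall(self, step: str) -> str | None:
        return self.completed.get(step)


def plan(goal: str) -> list[str]:
    # Hypothetical planner: a real agent would ask an LLM to break the goal into steps.
    return [f"research {goal}", f"draft {goal}", f"review {goal}"]


def run(goal: str, memory: Memory) -> list[str]:
    log = []
    for step in plan(goal):
        if memory.recall(step) is not None:
            log.append(f"skip: {step}")  # already done in an earlier session
            continue
        result = f"done({step})"  # placeholder for actually executing the step
        memory.remember(step, result)
        log.append(f"run: {step}")
    return log


memory = Memory()
print(run("Q3 report", memory))  # first session: every step runs
print(run("Q3 report", memory))  # second session: every step is recalled and skipped
```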

Tool integration capabilities enable agentic AI to interact with external software, databases, and APIs. This means an agentic system might automatically update spreadsheets, send emails, schedule meetings, or trigger business processes based on its analysis and decision-making.
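In practice, tool integration usually comes down to a registry of callable functions plus a dispatcher that executes the structured call the model emits. The sketch below assumes the model returns a JSON object with `name` and `arguments` fields. Actual formats differ between providers, and the two tools are hypothetical stand-ins for real email and spreadsheet APIs.

```python
import json
from typing import Callable, Dict

TOOLS: Dict[str, Callable[..., str]] = {}


def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function so the agent can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def send_email(to: str, subject: str) -> str:
    # Hypothetical stand-in; a real tool would call an email API here.
    return f"email to {to}: {subject}"


@tool
def update_cell(sheet: str, cell: str, value: str) -> str:
    # Hypothetical stand-in for a spreadsheet API call.
    return f"{sheet}!{cell} = {value}"


def dispatch(model_output: str) -> str:
    # Assumed shape: {"name": "<tool>", "arguments": {...}}
    call = json.loads(model_output)
    fn = TOOLS.get(call["name"])
    if fn is None:
        raise ValueError(f"unknown tool: {call['name']}")
    return fn(**call["arguments"])


print(dispatch('{"name": "update_cell", "arguments": {"sheet": "Budget", "cell": "B2", "value": "1200"}}'))
```

Rejecting unknown tool names, rather than guessing, is a simple first guard against the model requesting actions it was never granted.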

Self-reflection mechanisms allow these systems to evaluate their own output, identify errors or gaps, and adjust their strategies accordingly. This creates a feedback loop in which the system can catch and correct some of its own mistakes before a human needs to step in.
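Self-reflection is often implemented as a generate, critique, retry loop with a hard cap on attempts. In this sketch, `generate` and `critique` are hypothetical stand-ins for two LLM calls (or one model prompted in two roles), and the strings they return are toy data. The critic returns `None` when it finds no problem; otherwise it returns feedback for the next attempt.

```python
from typing import Callable, Optional, Tuple


def reflect_and_retry(
    generate: Callable[[Optional[str]], str],
    critique: Callable[[str], Optional[str]],
    max_attempts: int = 3,
) -> Tuple[str, int]:
    """Generate a draft, let a critic evaluate it, and retry with the feedback.

    Returns the final draft and the number of attempts used.
    """
    feedback: Optional[str] = None
    draft = ""
    for attempt in range(1, max_attempts + 1):
        draft = generate(feedback)
        feedback = critique(draft)
        if feedback is None:  # the critic found nothing to fix
            return draft, attempt
    return draft, max_attempts  # stop after a bounded number of tries


# Hypothetical stand-ins for LLM calls.
def generate(feedback: Optional[str]) -> str:
    return "Revenue rose 8% in Q3." if feedback else "Revenue rose."


def critique(draft: str) -> Optional[str]:
    return None if any(ch.isdigit() for ch in draft) else "Include the actual figure."


print(reflect_and_retry(generate, critique))
```

The attempt cap matters because a critic that never approves would otherwise loop forever; when the cap is reached, escalating to a human reviewer is a common fallback.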

Real-World Applications of Agentic AI

Modern businesses are deploying agentic AI across various functions. Marketing departments use agentic systems to manage entire campaign lifecycles—from audience research and content creation to performance monitoring and optimization. Supply chain management benefits from agentic AI that can predict demand, optimize inventory levels, and automatically adjust procurement schedules.

In financial services, agentic AI systems monitor market conditions, execute trades based on predetermined strategies, and adjust portfolios in real time. These systems can process vast amounts of data, identify patterns, and make decisions far faster than human analysts.

The Synergy Between RAG and Agentic AI

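The most powerful AI implementations often combine RAG and agentic capabilities, creating systems that are both well-informed and autonomous. The combination addresses the main limitation of each approach in isolation: RAG alone retrieves information but does not act on it, while an agent without retrieval plans and acts on whatever knowledge it was trained on, which may be stale.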

Enhanced Decision-Making Through Information Access

An agentic AI system equipped with RAG capabilities can make more informed decisions by accessing current information during its planning and execution phases. For example, an agentic project management system might use RAG to retrieve the latest project specifications, team availability, and resource constraints before creating and executing project plans.
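One way to wire retrieval into an agent is to call the retriever both before planning and before each step, so that the plan and every action see current information. In this sketch, `plan`, `retrieve`, and `act` are hypothetical callables (in a real system, an LLM planner, a RAG retriever, and a tool executor), and the small dictionary is invented data standing in for a knowledge base.

```python
from typing import Callable, Dict, List


def run_agent(
    goal: str,
    plan: Callable[[str, List[str]], List[str]],
    retrieve: Callable[[str], List[str]],
    act: Callable[[str, List[str]], str],
) -> List[str]:
    # Retrieve before planning so the plan reflects current specs and constraints.
    steps = plan(goal, retrieve(goal))
    results = []
    for step in steps:
        # Retrieve again per step: conditions may have changed since planning.
        results.append(act(step, retrieve(step)))
    return results


# Hypothetical knowledge base keyed by query, standing in for a real retriever.
KB: Dict[str, List[str]] = {
    "launch": ["Spec v2 requires SSO support."],
    "implement SSO": ["Ben is available this sprint."],
}


def retrieve(query: str) -> List[str]:
    return KB.get(query, [])


def plan(goal: str, context: List[str]) -> List[str]:
    # Only add the SSO task if the retrieved spec calls for it.
    return ["implement SSO"] if any("SSO" in c for c in context) else []


def act(step: str, context: List[str]) -> str:
    return f"{step} (context: {'; '.join(context)})"


print(run_agent("launch", plan, retrieve, act))
```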

This combination is particularly powerful in dynamic environments where conditions change rapidly. An agentic trading system with RAG capabilities can access real-time market news, economic indicators, and analyst reports to inform its decision-making process, adapting strategies based on the most current information available.

Continuous Learning and Adaptation

Because a RAG knowledge base can be updated by re-indexing new or changed documents, with no model retraining, RAG-enabled agentic systems can keep their decision-making aligned with current information. The result is an AI system that does not just execute predefined workflows but adapts its actions to new information and changing circumstances.
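Updating a RAG knowledge base means re-indexing documents, not retraining the model. A minimal sketch follows, assuming a simple in-memory inverted index with keyword-overlap ranking in place of a production vector store. The `KnowledgeBase` class is hypothetical, and the prices are toy data. Re-indexing a document replaces its stale version, and the next query sees the new facts immediately.

```python
from __future__ import annotations

import re
from collections import defaultdict


class KnowledgeBase:
    """In-memory inverted index with upserts: updated facts become retrievable
    immediately, with no model retraining involved."""

    def __init__(self) -> None:
        self.docs: dict[str, str] = {}
        self.index: defaultdict[str, set[str]] = defaultdict(set)

    @staticmethod
    def _terms(text: str) -> set[str]:
        return set(re.findall(r"[a-z0-9]+", text.lower()))

    def upsert(self, doc_id: str, text: str) -> None:
        # Drop the stale version's postings before indexing the new text.
        if doc_id in self.docs:
            for term in self._terms(self.docs[doc_id]):
                self.index[term].discard(doc_id)
        self.docs[doc_id] = text
        for term in self._terms(text):
            self.index[term].add(doc_id)

    def search(self, query: str) -> list[str]:
        # Rank documents by how many distinct query terms they contain.
        hits: dict[str, int] = defaultdict(int)
        for term in self._terms(query):
            for doc_id in self.index.get(term, set()):
                hits[doc_id] += 1
        ranked = sorted(hits, key=lambda d: (-hits[d], d))
        return [self.docs[d] for d in ranked]


kb = KnowledgeBase()
kb.upsert("pricing", "The Pro plan costs 20 dollars per month.")
print(kb.search("Pro plan price"))
kb.upsert("pricing", "The Pro plan costs 25 dollars per month from July.")
print(kb.search("Pro plan price"))
```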

Implementation Considerations for Businesses

Successfully implementing these technologies requires careful planning and consideration of organizational needs. RAG systems require robust knowledge management infrastructure, including well-organized document repositories and efficient search capabilities. Organizations must also consider data governance, ensuring that retrieved information is accurate, current, and appropriately secured.

Agentic AI implementation demands clear goal definition and boundary setting. Organizations must determine the level of autonomy they’re comfortable granting to AI systems and establish monitoring mechanisms to ensure systems operate within acceptable parameters.
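Boundary setting can be made explicit in code: every action the agent proposes passes through a policy check before it runs. A minimal sketch follows, assuming a hypothetical `Policy` with an allowlist of actions and a spending threshold above which a human must approve. The action names and amounts are invented for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class Decision(Enum):
    EXECUTE = "execute"
    NEEDS_APPROVAL = "needs_approval"
    BLOCK = "block"


@dataclass(frozen=True)
class Policy:
    allowed_actions: FrozenSet[str]  # what the agent may do at all
    approval_threshold: float        # amounts above this need human sign-off


def check(policy: Policy, action: str, amount: float = 0.0) -> Decision:
    if action not in policy.allowed_actions:
        return Decision.BLOCK  # outside the agent's mandate entirely
    if amount > policy.approval_threshold:
        return Decision.NEEDS_APPROVAL  # allowed, but a human must confirm
    return Decision.EXECUTE


policy = Policy(frozenset({"reorder_stock", "send_report"}), approval_threshold=5000.0)
print(check(policy, "reorder_stock", 1200.0))   # Decision.EXECUTE
print(check(policy, "reorder_stock", 12000.0))  # Decision.NEEDS_APPROVAL
print(check(policy, "delete_records"))          # Decision.BLOCK
```

Keeping the policy separate from the agent's own reasoning means the limits hold even if the model is confused or manipulated into proposing something it should not.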

Security and Governance Challenges

Both RAG and agentic AI introduce unique security considerations. RAG systems must securely access and process potentially sensitive information from various sources. Agentic systems require careful permission management to prevent unauthorized actions or access to restricted resources.
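For RAG, a common safeguard is to filter documents by the requesting user's permissions before ranking, so restricted text can never be placed in the model's prompt. A minimal sketch follows, assuming each document carries a hypothetical `allowed_groups` field; managed vector databases typically offer metadata filters that serve the same purpose. The documents and group names are invented.

```python
import re
from dataclasses import dataclass
from typing import FrozenSet, List, Set


@dataclass(frozen=True)
class Document:
    text: str
    allowed_groups: FrozenSet[str]  # hypothetical ACL metadata attached at indexing time


def terms(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def secure_retrieve(query: str, docs: List[Document], user_groups: Set[str], k: int = 3) -> List[str]:
    q = terms(query)
    # Apply permissions FIRST: documents the user cannot see are never scored.
    visible = [d for d in docs if d.allowed_groups & user_groups]
    scored = [(len(q & terms(d.text)), d.text) for d in visible]
    scored = [s for s in scored if s[0] > 0]  # drop documents with no matching terms
    scored.sort(key=lambda s: s[0], reverse=True)
    return [text for _, text in scored[:k]]


docs = [
    Document("Salary bands for 2025 engineering roles.", frozenset({"hr"})),
    Document("Engineering onboarding checklist.", frozenset({"hr", "engineering"})),
]
print(secure_retrieve("engineering roles", docs, {"engineering"}))  # only the checklist
print(secure_retrieve("engineering roles", docs, {"hr"}))           # both documents
```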

Organizations implementing these technologies must establish comprehensive governance frameworks that balance innovation with risk management. This includes regular auditing of AI decisions, maintaining human oversight capabilities, and ensuring compliance with relevant regulations and industry standards.
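Auditing is easier when every agent decision is written to an append-only record. The sketch below is one possible design, not a standard: each entry stores the hash of the previous one, so editing any past entry breaks the chain and `verify()` reports it. The field names, agent name, and inputs are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only record of agent decisions with a hash chain for tamper evidence."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    def record(self, agent: str, action: str, inputs: Dict[str, Any], rationale: str) -> Dict[str, Any]:
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "inputs": inputs,
            "rationale": rationale,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry["hash"] = _digest(entry)  # hash covers every field above
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.record("procurement-agent", "reorder_stock", {"sku": "A-100", "qty": 50}, "Stock below reorder point")
log.record("procurement-agent", "send_report", {"to": "ops"}, "Weekly summary due")
print(log.verify())  # True
log.entries[0]["rationale"] = "edited later"
print(log.verify())  # False
```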

The Future Landscape

The convergence of RAG and agentic AI technologies points toward a future where AI systems are both highly knowledgeable and autonomously capable. These hybrid systems will likely become the standard for enterprise AI implementations, offering the best of both worlds: access to current, accurate information and the ability to act on that information intelligently.

As these technologies mature, we can expect to see more sophisticated integration patterns, improved user interfaces for managing AI agents, and enhanced security frameworks for governing autonomous AI operations. The organizations that begin exploring and implementing these technologies today will be best positioned to capitalize on their full potential as they continue to evolve.

The question isn’t whether RAG and agentic AI will transform business operations—it’s how quickly organizations can adapt to leverage these powerful capabilities. The time to start exploring and implementing these technologies is now, as they represent fundamental shifts in how we think about AI’s role in business and society.

Comprehensive Overview: LLMs and RAG Integration (2025)

Retrieval-Augmented Generation (RAG) is primarily an architectural pattern rather than a built-in feature of specific language models. Most modern LLMs can be configured to operate within a RAG pipeline, with retrieval components and vector databases integrated at the application level.

Major LLM Providers Supporting RAG Integration

OpenAI

- GPT-4 family (GPT-4, GPT-4 Turbo, GPT-4o)

- GPT-3.5 Turbo

- GPT-4.1, whose context window of up to 1 million tokens suits RAG

Anthropic

- Claude 4 family (Opus, Sonnet)

- Claude 3.5 family (Sonnet, Haiku)

- Claude 3 family (all variants)

Google

- Gemini 2.5 Pro

- Gemini 1.5 Pro and Flash

- PaLM 2

- Vertex AI foundation models

Meta

- Llama 2 (7B, 13B, 70B)

- Llama 3 (8B, 70B)

- Code Llama for code-specific RAG applications

Mistral AI

- Mistral 7B

- Mixtral 8x7B

- Mistral Large

- Codestral

Other Notable Providers

- Cohere – Command models optimized for retrieval

- AI21 Labs – Jurassic models and the newer Jamba models

- Hugging Face – Open-source model hub and the Transformers library

- xAI – Grok models (limited open access)

- DeepSeek – Multimodal and multilingual LLMs

- Alibaba Qwen – Open-source foundation models

Enterprise and Cloud-Based RAG Solutions

Microsoft

- Azure OpenAI Service with built-in RAG integration

- Azure AI Search (formerly Azure Cognitive Search)

- Microsoft Copilot (leveraging RAG for enterprise productivity)

Amazon

- Amazon Bedrock – Unified access to LLMs, with managed RAG through Bedrock Knowledge Bases

- Amazon Kendra – Intelligent enterprise search

- SageMaker JumpStart – Pretrained models and RAG deployment templates

Google

- Vertex AI Search and Conversation

- Enterprise search with LLM integration

- AI features in Google Workspace using RAG principles

Open-Source RAG Frameworks and Tools

RAG-Oriented Frameworks

- LangChain – Modular LLM orchestration

- LlamaIndex – Data-centric RAG pipelines

- Haystack – Scalable RAG framework for production

Vector Databases and Tooling

- ChromaDB – Lightweight vector store

- Pinecone – Fully managed vector database

- Weaviate – Open-source vector search engine

- Qdrant – High-performance vector similarity engine

Specialized RAG-Optimized Models

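These models and architectures were designed specifically for retrieval or retrieval-augmented generation. DPR, ColBERT, and BGE are retrievers (they produce embeddings for search) rather than text generators: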

- RAG-Token and RAG-Sequence – Developed by Facebook AI

- FiD (Fusion-in-Decoder) – Retrieval-aware generation

- DPR (Dense Passage Retrieval) – Effective retriever backbone

- ColBERT – Efficient and expressive retrieval

- BGE models – Developed by the Beijing Academy of AI for retrieval scenarios
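The difference between single-vector retrievers such as DPR and ColBERT is easiest to see in how they score a document. DPR compares one query vector with one passage vector. ColBERT keeps one vector per token and uses "late interaction": each query token takes its best match among the document's tokens, and those maxima are summed. The sketch below computes that MaxSim score on hand-made 2-D vectors as an assumption for illustration; real ColBERT vectors come from a trained BERT encoder and are normalized to unit length.

```python
from typing import List, Sequence

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def maxsim_score(query_vecs: List[Vector], doc_vecs: List[Vector]) -> float:
    """ColBERT-style late interaction: for every query token embedding, take the
    highest dot product with any document token embedding, then sum."""
    return sum(max(dot(q, d) for d in doc_vecs) for q in query_vecs)


# Hand-made unit vectors standing in for token embeddings.
query = [[1.0, 0.0], [0.0, 1.0]]  # two query tokens pointing in different directions
doc_a = [[0.0, 1.0], [1.0, 0.0]]  # matches both query tokens (order does not matter)
doc_b = [[1.0, 0.0], [1.0, 0.0]]  # matches only the first query token

print(maxsim_score(query, doc_a))  # 2.0
print(maxsim_score(query, doc_b))  # 1.0
```

Because document token vectors can be computed ahead of time and only the cheap MaxSim step runs at query time, ColBERT keeps much of the expressiveness of token-level matching while remaining fast.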

Industry-Specific RAG Implementations

Legal

- Case law retrieval assistants

- Legal contract summarization and analysis tools

Healthcare

- Clinical decision support from medical research literature

- Symptom-to-diagnosis inference using medical knowledge bases

Finance

- RAG-enhanced financial report generation

- Real-time regulatory and compliance lookup systems

Customer Service

- Knowledge base-driven chatbots

- Support ticket automation and summarization

Key Considerations for RAG Integration

RAG is not a model feature, but an application-level architecture combining:

- A retriever (searches a knowledge base or vector store)

- A generator (an LLM that synthesizes answers based on retrieved content)
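Because the retriever and generator are separate components, application code can treat them as interchangeable interfaces. The following minimal sketch uses Python's `typing.Protocol`. `StaticRetriever` and `EchoGenerator` are hypothetical test doubles meant to be replaced by a real vector-store client and LLM client, and the prompt template is an assumption.

```python
from typing import List, Protocol


class Retriever(Protocol):
    def retrieve(self, query: str, k: int) -> List[str]: ...


class Generator(Protocol):
    def generate(self, prompt: str) -> str: ...


class RAGPipeline:
    """Composes any retriever with any LLM: the RAG logic lives here, in
    application code, not inside the model."""

    def __init__(self, retriever: Retriever, generator: Generator, k: int = 3) -> None:
        self.retriever = retriever
        self.generator = generator
        self.k = k

    def answer(self, question: str) -> str:
        passages = self.retriever.retrieve(question, self.k)
        context = "\n\n".join(passages)
        prompt = f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"
        return self.generator.generate(prompt)


class StaticRetriever:
    """Test double: returns fixed passages in order."""

    def __init__(self, passages: List[str]) -> None:
        self.passages = passages

    def retrieve(self, query: str, k: int) -> List[str]:
        return self.passages[:k]


class EchoGenerator:
    """Test double: returns the prompt so you can inspect what the LLM would see."""

    def generate(self, prompt: str) -> str:
        return prompt


pipeline = RAGPipeline(
    StaticRetriever(["Policy A applies to contractors.", "Policy B applies to staff."]),
    EchoGenerator(),
    k=1,
)
print(pipeline.answer("Which policy applies to contractors?"))
```

Swapping models then means swapping one object, which is why the same pipeline can sit in front of any of the LLMs listed earlier.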

When selecting models for RAG, consider:

- Context window size (e.g., GPT-4o supports up to 128k tokens)

- Latency and throughput

- API and hosting options (self-hosted vs cloud)

- Security and compliance

- Multilingual or multimodal capabilities
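Context window size matters because retrieved passages compete with the instructions, the question, and the answer for the same token budget. The greedy packing sketch below assumes chunks arrive sorted by relevance and uses whitespace word counts as a rough stand-in for the model's own tokenizer (real token counts differ). `pack_context` and its parameters are hypothetical.

```python
from typing import List


def count_tokens(text: str) -> int:
    # Rough proxy only: real token counts come from the model's own tokenizer.
    return len(text.split())


def pack_context(chunks: List[str], context_window: int, reserved: int) -> List[str]:
    """Greedily keep the highest-ranked chunks that fit in the context window.

    `chunks` must already be sorted by relevance; `reserved` covers the
    instructions, the question, and room for the model's answer.
    """
    budget = context_window - reserved
    packed: List[str] = []
    used = 0
    for chunk in chunks:
        cost = count_tokens(chunk)
        if used + cost > budget:
            continue  # too big for what is left; a shorter later chunk may still fit
        packed.append(chunk)
        used += cost
    return packed


print(pack_context(["a b c d", "e f g h i j", "k l"], context_window=10, reserved=3))
# ['a b c d', 'k l']
```

Skipping an oversized chunk rather than stopping lets a shorter, lower-ranked chunk use the remaining space; truncating chunks is an alternative, at the risk of cutting off the sentence that mattered.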

RAG has emerged as a standard pattern for real-time, knowledge-rich AI applications across domains. Most capable LLMs can support it, provided they are paired with appropriate retrieval and orchestration infrastructure.

© 2025 Rise&Inspire. All Rights Reserved.
